DuetG AI Connector

| Developer | duetg |
|---|---|
| Last updated | April 17, 2026, 22:07 |
| PHP version | 7.4 or higher |
| WordPress version | 7.0 |
| License | GPL-2.0-or-later |
| License URI | License information |

Description:

- Ollama (local AI)

- LM Studio (local AI)

- MiniMax

- Moonshot

- DeepSeek

- SiliconFlow

- Any other OpenAI-compatible API provider

- Alt Text Generation - Generates descriptive alt text for images using AI vision models (requires VLM model)

- Content Classification - AI-powered suggestions for post tags and categories based on content analysis

- Content Summarization - Summarizes long-form content into digestible overviews

- Excerpt Generation - Generates excerpt suggestions from content

- Image Generation - Generate featured images and inline images using AI

- Image Prompt Generation - Generates a prompt from post content that can be used to generate an image

- Meta Description Generation - Generates meta description suggestions and integrates with various SEO plugins

- Review Notes - Reviews post content block-by-block and adds Notes with suggestions for Accessibility, Readability, Grammar, and SEO

- Title Generation - Generates title suggestions from content

Standard text-only models support:

- Content Classification

- Content Summarization

- Excerpt Generation

- Image Prompt Generation

- Meta Description Generation

- Review Notes

- Title Generation

Vision-Language Models (VLM) additionally support:

- Alt Text Generation (analyzes image content)

Image Generation Endpoint:

- Image Generation (featured images and inline images)

Note: Only Alt Text Generation requires a VLM. All other features work with standard text-only models.

Installation:

- Upload the `duetg-ai-connector` folder to the `/wp-content/plugins/` directory

- Activate the plugin through the "Plugins" menu in WordPress

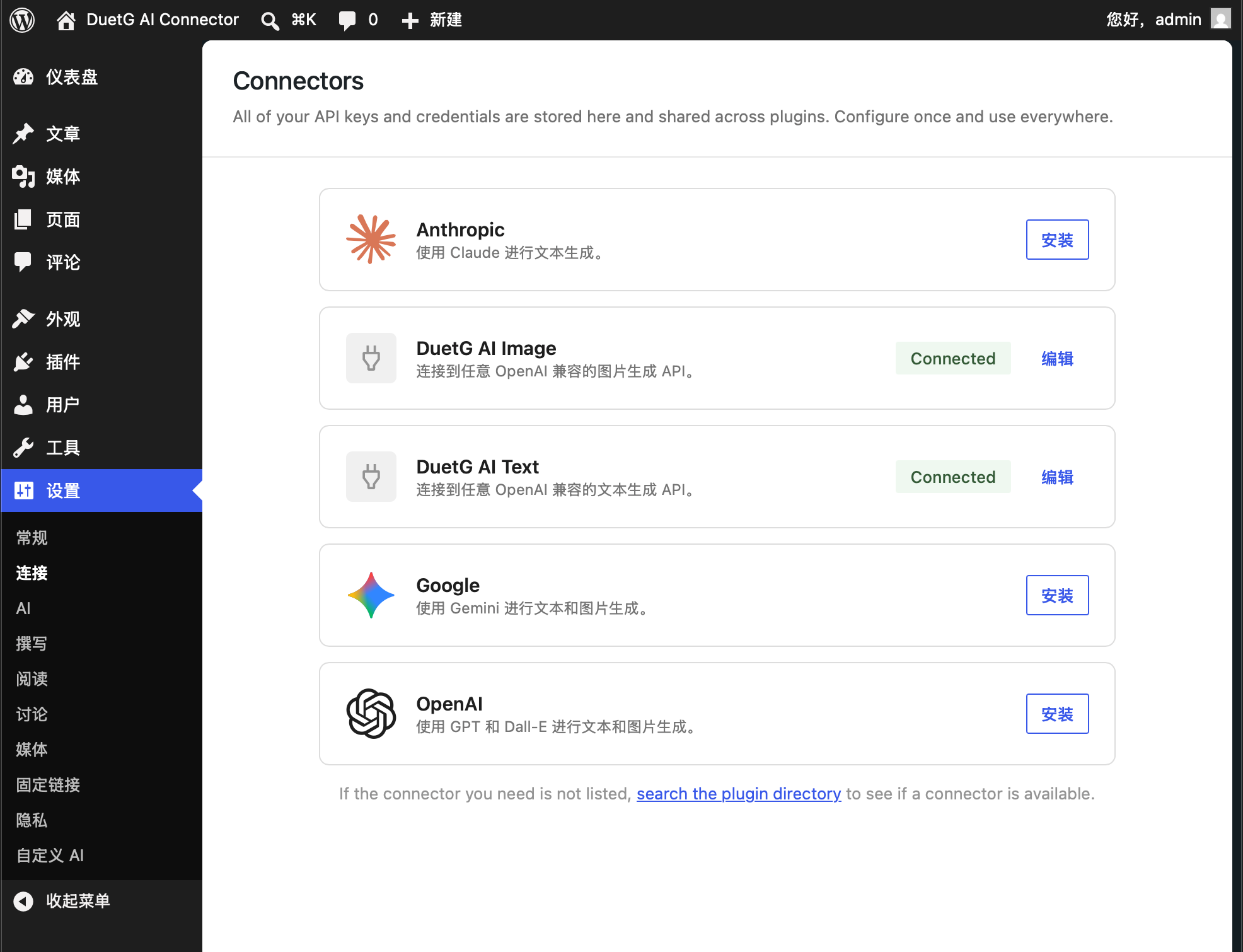

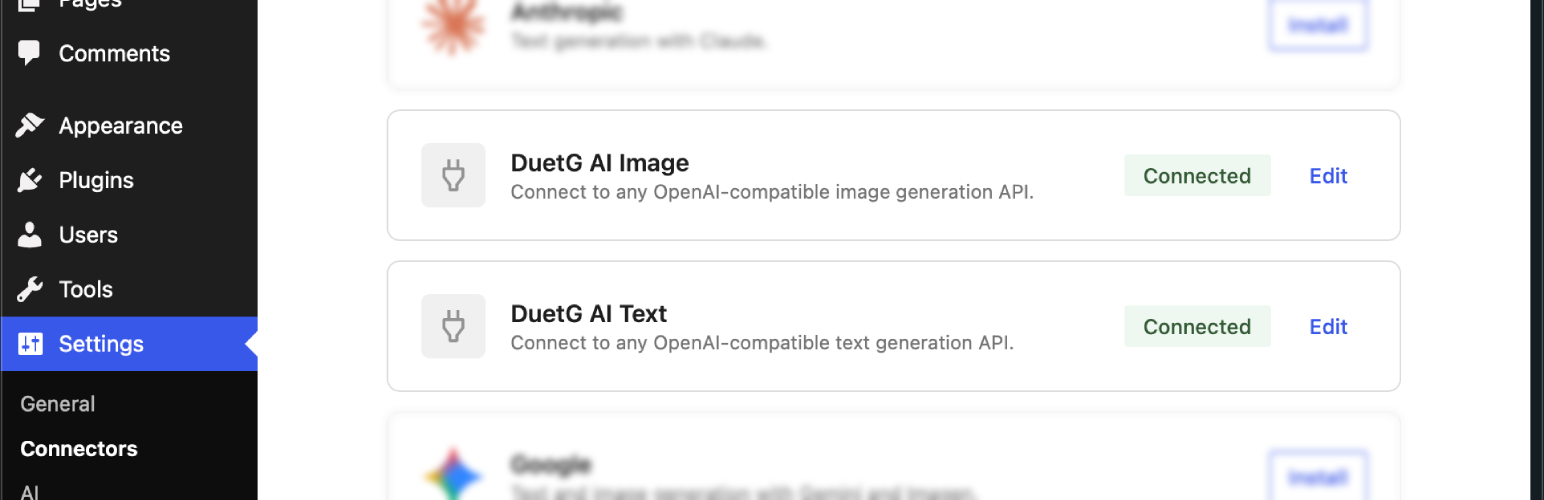

- Configure your API key at Settings > Connectors

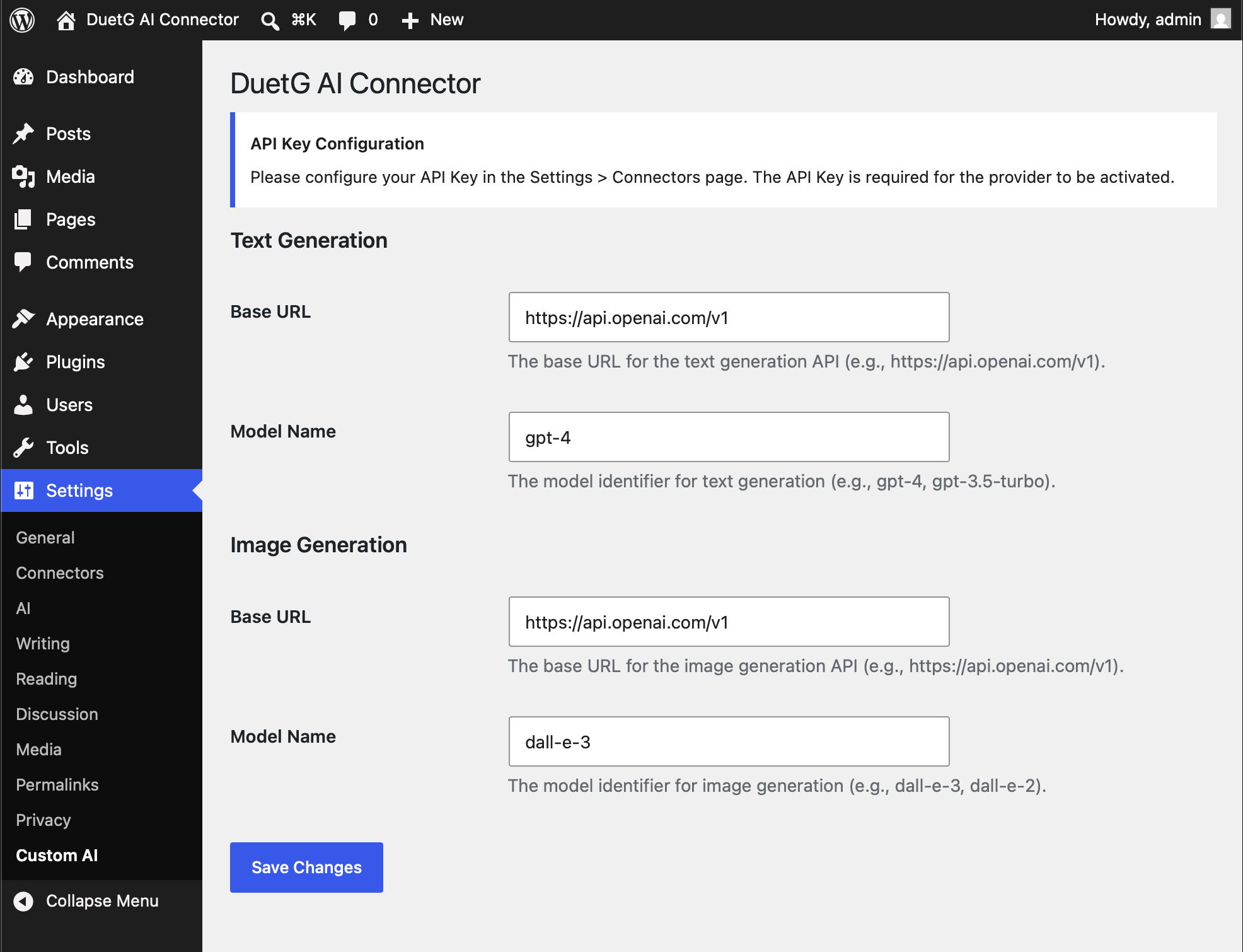

- Go to Settings > Custom AI to configure your Base URL and model

- (Optional) Go to Tools > Test AI to verify your configuration

Screenshots:

FAQ:

How do I enable debug logging?

To enable debug logging, add the following to your wp-config.php:

```php
define('DUETGAICON_DEBUG', true);
```

When enabled, debug information will be written to your server's debug log (usually wp-content/debug.log). This includes:

- Request/response details for AI API calls

- Provider registration status

- Model handler information

Note: Disable debug logging in production environments to avoid performance impact and log file growth.
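For context, here is a sketch of how the plugin constant might sit next to the standard WordPress debug constants in `wp-config.php`. `WP_DEBUG`, `WP_DEBUG_LOG`, and `WP_DEBUG_DISPLAY` are core WordPress constants. Whether this plugin needs them in order to write to `wp-content/debug.log` is an assumption, not something stated above.

```php
// wp-config.php fragment: place above the "That's all, stop editing!" line.

// Core WordPress debug constants (assumed to be needed for wp-content/debug.log).
define('WP_DEBUG', true);
define('WP_DEBUG_LOG', true);      // Write errors and notices to wp-content/debug.log.
define('WP_DEBUG_DISPLAY', false); // Keep debug output off rendered pages.

// Plugin-specific switch described in this FAQ entry.
define('DUETGAICON_DEBUG', true);
```

Setting the constants back to `false` (or removing them) turns logging off again before going to production.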

Does this plugin work without WordPress 7.0?

No. This plugin requires WordPress 7.0 or later because it uses the built-in Connectors API to manage API keys.

Why do the suggestion count and the note count sometimes not match?

When using Review Notes, you may notice that the number of suggestions returned by the AI does not exactly match the number of notes shown in the editor. This is expected behavior and has two causes:

- Multi-category suggestions: Some AI models return a single suggestion that applies to multiple review categories (e.g., `review_type: "seo, accessibility"`). The plugin preserves these as-is, so one suggestion may appear under multiple note categories in WordPress AI Client.

- Model response format: The AI model controls the number of suggestions it returns, and WordPress AI Client determines how to display and categorize them. The plugin forwards the model's response without modifying the count.
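The multi-category case can be illustrated with a small standalone sketch. The suggestion data and the `example_group_by_category()` helper are hypothetical. This is not the grouping logic of the plugin or of WordPress AI Client, only a demonstration of how one suggestion with two categories can yield more notes than suggestions.

```php
<?php
// Hypothetical suggestions, shaped loosely after the review_type example above.
$suggestions = [
    ['review_type' => 'seo, accessibility', 'text' => 'Add descriptive alt text to the hero image.'],
    ['review_type' => 'grammar', 'text' => 'Fix the subject-verb agreement in paragraph two.'],
];

/**
 * Group suggestion texts by category, splitting comma-separated review types.
 * Illustrative helper only.
 */
function example_group_by_category(array $suggestions): array
{
    $grouped = [];
    foreach ($suggestions as $suggestion) {
        // "seo, accessibility" becomes ["seo", "accessibility"].
        $types = array_filter(array_map('trim', explode(',', $suggestion['review_type'])));
        foreach ($types as $type) {
            $grouped[$type][] = $suggestion['text'];
        }
    }
    return $grouped;
}

$grouped   = example_group_by_category($suggestions);
$noteCount = array_sum(array_map('count', $grouped));

// Prints "2 suggestions, 3 notes".
printf("%d suggestions, %d notes\n", count($suggestions), $noteCount);
```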

How do I find my AI provider's Base URL?

- Ollama (local): `http://localhost:11434/v1`

- LM Studio (local): `http://localhost:1234/v1`

- MiniMax: `https://api.minimax.io/v1`

- Moonshot: `https://api.moonshot.ai/v1`

- DeepSeek: `https://api.deepseek.com/v1`

- SiliconFlow: `https://api.siliconflow.cn/v1`

- Other providers: check their documentation
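As a rough illustration of how a Base URL is typically used, the sketch below joins a Base URL from the list above with the `/chat/completions` path that OpenAI-compatible APIs conventionally expose. The constant and function names are made up for this example, and how the plugin itself builds request URLs is not documented here.

```php
<?php
// Base URLs copied from the list above; the array keys are illustrative labels.
const EXAMPLE_BASE_URLS = [
    'ollama'      => 'http://localhost:11434/v1',
    'lm_studio'   => 'http://localhost:1234/v1',
    'minimax'     => 'https://api.minimax.io/v1',
    'moonshot'    => 'https://api.moonshot.ai/v1',
    'deepseek'    => 'https://api.deepseek.com/v1',
    'siliconflow' => 'https://api.siliconflow.cn/v1',
];

/**
 * Build a chat completions endpoint from a Base URL.
 * Assumes the OpenAI-style "/chat/completions" path; tolerates a trailing slash.
 */
function example_chat_endpoint(string $baseUrl): string
{
    return rtrim($baseUrl, '/') . '/chat/completions';
}

echo example_chat_endpoint(EXAMPLE_BASE_URLS['ollama']), "\n";
```

The Base URL normally ends at the version segment (`/v1`), not at a specific endpoint.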

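Do I need an API key?

Some providers require an API key. For local installations that do not require authentication (such as Ollama), you can enter any string (such as "not-required") as the API key.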
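A placeholder key works because OpenAI-compatible APIs conventionally receive the key as a bearer token in the `Authorization` header, and a server without authentication can simply ignore that header. The sketch below assumes that convention. The `example_build_headers()` helper is hypothetical and is not taken from the plugin.

```php
<?php
/**
 * Build request headers for an OpenAI-compatible API.
 * Assumption: the key is sent as "Authorization: Bearer <key>".
 */
function example_build_headers(string $apiKey): array
{
    return [
        'Content-Type'  => 'application/json',
        'Authorization' => 'Bearer ' . $apiKey,
    ];
}

// For a local server without authentication, any non-empty placeholder will do.
$headers = example_build_headers('not-required');
```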

Why do local reasoning/thinking models sometimes time out?

Local reasoning models running on Ollama (such as Gemma 4 and QwQ) produce a long "thinking" chain before generating the final answer. This can take 30-60 seconds or longer and may trigger cURL's low-speed-limit timeout (30 seconds by default).

Cloud models generally work well: most cloud API providers (DeepSeek, MiniMax, Moonshot, etc.) respond quickly without timeout issues. If a cloud model frequently times out, it may be producing unusually long thinking chains; try switching to a different model.

Recommended solutions for local models:

- Use non-reasoning models for local AI features. For Ollama, models like `qwen2.5:7b`, `llama3.2:3b`, or `phi3` work well without the timeout issue.

- Configure Ollama to keep models loaded:

```bash
export OLLAMA_KEEP_ALIVE=-1  # Keep model in memory
```

How do I use a local AI provider (such as Ollama or LM Studio)?

By default, WordPress blocks requests to localhost and private IP addresses for security (SSRF protection). If you're using a local AI provider, you can disable this protection by adding to your wp-config.php:

```php
define('DUETGAICON_ALLOW_LOCAL_URLS', true);
```

Warning: Disabling SSRF protection allows requests to private/local IPs. Only enable this if you trust your local AI provider and your server is not directly accessible from the internet.

This setting applies to both text models and image models when using a local AI provider.

Tip: When DUETGAICON_ALLOW_LOCAL_URLS is enabled, a Network Connectivity Test tool appears on the Test AI page (Tools > Test AI). You can use it to verify that your WordPress server can reach your local AI provider before running actual AI feature tests. This is especially useful for debugging connection issues with local Ollama or LM Studio installations.
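To make the SSRF check more concrete, here is a minimal sketch of how a URL could be classified as local or private. It is not the plugin's actual detection code. The function name is hypothetical, and it relies only on PHP's built-in `parse_url()` and `filter_var()`.

```php
<?php
/**
 * Return true when the URL points at localhost or a private/reserved IP.
 * Illustrative only: hostnames are not resolved, so a public DNS name that
 * resolves to a private address would not be detected by this sketch.
 */
function example_is_local_url(string $url): bool
{
    $host = parse_url($url, PHP_URL_HOST);
    if (!is_string($host) || $host === '') {
        return false; // Not a parseable absolute URL.
    }

    // Strip the brackets that surround IPv6 literals, e.g. "[::1]".
    $host = strtolower(trim($host, '[]'));
    if ($host === 'localhost') {
        return true;
    }

    // Anything that is not an IP literal is treated as a regular hostname.
    if (filter_var($host, FILTER_VALIDATE_IP) === false) {
        return false;
    }

    // Validation fails for private and reserved ranges, which marks them as local.
    $flags = FILTER_FLAG_NO_PRIV_RANGE | FILTER_FLAG_NO_RES_RANGE;
    return filter_var($host, FILTER_VALIDATE_IP, $flags) === false;
}
```

With this sketch, `http://localhost:11434/v1` and `http://192.168.1.10:1234/v1` are classified as local, while `https://api.deepseek.com/v1` is not.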

How do I use it in code?

```php
use WordPress\AiClient\AiClient;

$registry = AiClient::defaultRegistry();

// Text Generation
$model = $registry->getProviderModel('custom_text', 'gpt-4');
$result = $model->generateTextResult([
    new \WordPress\AiClient\Messages\DTO\UserMessage([
        new \WordPress\AiClient\Messages\DTO\MessagePart('Your prompt here')
    ])
]);
echo $result->toText();

// Image Generation
$model = $registry->getProviderModel('custom_image', 'dall-e-3');
$result = $model->generateImageResult([
    new \WordPress\AiClient\Messages\DTO\UserMessage([
        new \WordPress\AiClient\Messages\DTO\MessagePart('Your prompt here')
    ])
]);
$files = $result->toImageFiles();
```

Changelog:

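- Added isLocalUrl() helper method to detect localhost/private IP URLs

- Added a clear error message when a local AI URL is configured but DUETGAICON_ALLOW_LOCAL_URLS is disabled

- Fixed PHP syntax error (extra ); in TestPage.php)

- Fixed PHPCS: use wp_kses_post when outputting errors to support HTML formatting

- Fixed PHPCS: use sprintf with translators comments in i18n-friendly error messages

- Network connectivity test button now uses primary style

- Refactored duplicated local URL detection code into Helper::isLocalUrl()

- Renamed plugin to DuetG AI Connector (formerly Custom AI Provider)

- Added content classification feature (AI-powered post tag and category suggestions)

- Added meta description generation feature

- Added WordPress connector registry integration for compatibility with WordPress 7.0+

- Added multi-provider response format normalization for MiniMax, Kimi, GLM, and Tencent Hunyuan

- Added automatic JSON keyword injection for DashScope (qwen/glm) models

- Improved JSON extraction by using balanced brace counting instead of non-greedy regex

- Added detailed debug logging with URL sanitization

- Added a network connectivity test to the Test AI page for debugging local Ollama connections

- Extended DUETGAICON_ALLOW_LOCAL_URLS to the entire plugin (text and image models)

- Added an FAQ section explaining timeout issues with local reasoning models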

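- Removed the WordPress AI version requirement from the documentation

- Cleaned up debug logging code in CustomTextGenerationModel

- Fixed PHPCS: sanitize test_url before use in the form field

- Network connectivity test is now hidden when DUETGAICON_ALLOW_LOCAL_URLS is disabled

- Fixed network connectivity test button style (now uses primary style)

- Added network connectivity test documentation to the local AI provider FAQ

- Fixed OutputNotEscaped error for image URLs in TestPage.php

- Fixed namespace declaration order in Settings.php (moved before the ABSPATH check)

- Fixed JS file version to use the JS file's own mtime instead of plugin.php

- Added function_exists() wrapper for custom_ai_debug() to prevent conflicts

- Fixed duplicate docblock comment in ThinkingTagHelper

- Fixed typo in a CustomImageGenerationModel comment ("if not setting" → "if not set")

- Added URL sanitization in debug logs to filter sensitive parameters

- Changed JSON regex from greedy to non-greedy matching for better accuracy

- Fixed dirname level bug in TestPage.php (dirname level 3 → 2)

- Fixed array_map key preservation bug in debug logging

- Inlined URL sanitization logic to avoid nested function definitions

- Fixed missing resource version in wp_enqueue_script()

- Fixed unescaped output on the test page

- Added direct file access protection to Settings.php

- Added SSRF protection for image URLs (blocks localhost/private IPs by default)

- Added DUETGAICON_ALLOW_LOCAL_URLS constant to allow local image URLs when needed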

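- Updated for the WordPress AI plugin (removed "Experimental" branding)

- Added compatibility with the WordPress AI plugin (0.6.0+)

- Added Alt Text generation support (requires a VLM model)

- Added image prompt generation support

- Added review notes feature

- Added title generation support

- Added content summarization support

- Added excerpt generation support

- Added thinking/reasoning support for DeepSeek, Qwen, MiniMax, Kimi, and other models

- Improved JSON response parsing for better compatibility

- Added debug logging (controlled via WP_DEBUG)

- Initial release

- Text generation support

- Image generation support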